![Assessing agreement between raters from the point of coefficients and loglinear models | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/fd4ca609a164e6c43d2f6ad68a57b86313bc8af0/6-Table5-1.png)

[PDF] Assessing agreement between raters from the point of coefficients and loglinear models | Semantic Scholar

Systematic literature reviews in software engineering—enhancement of the study selection process using Cohen's Kappa statistic - ScienceDirect

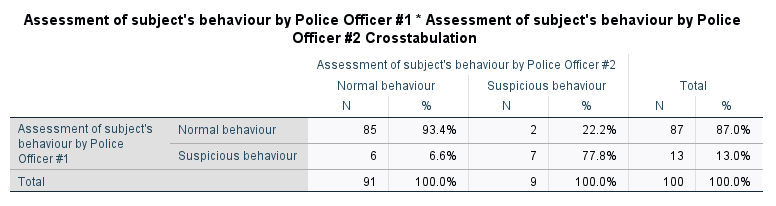

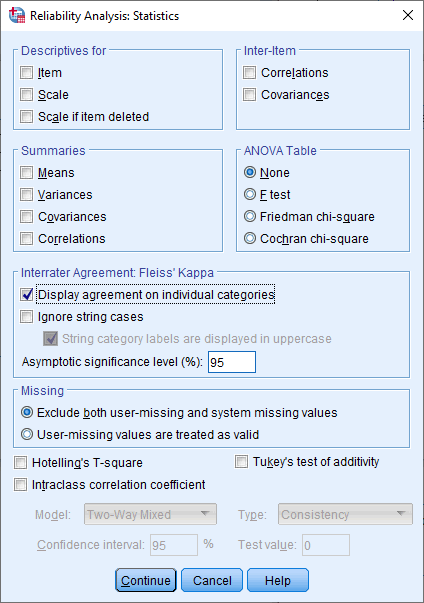

Cohen's kappa in SPSS Statistics - Procedure, output and interpretation of the output using a relevant example | Laerd Statistics

![Kappa Statistics and Strength of Agreement [44]. | Download Scientific Diagram](https://www.researchgate.net/publication/340998576/figure/tbl2/AS:888538270797824@1588855440209/Kappa-Statistics-and-Strength-of-Agreement-44.png)